Automated AI Inventory Forecasting: Python & Prophet

Built a robust ETL and forecasting pipeline using Python, SQLite, and Meta's Prophet AI to automate replenishment for ~7,800 SKUs. Reduced the complex supply chain planning cycle from days to hours.

🚀 Executive Summary

The Problem: Managing inventory replenishment for approximately 7,800 SKUs across 4 diverse retail branches was a convoluted, manual nightmare. It involved crashing Excel spreadsheets, processing massive XML exports (1.8GB) from Tally ERP, and relying entirely on subjective human guesswork leading to severe stock imbalances. The Solution: I engineered a custom, fully automated Python-based ETL (Extract, Transform, Load) and Forecasting engine leveraging Meta’s Prophet algorithm for time-series predictions and SQLite for robust data persistence. The Impact: The system successfully slashed the weekly replenishment planning cycle from several days of tedious data manipulation to just ~4.5 hours of automated processing. It introduced strict, objective, data-driven ordering logic, drastically reducing dead stock capital and preventing fast-mover stockouts.

1. The Challenge: Big Data vs. Manual Processes

Prior to this intervention, the supply chain department was trapped in an untenable cycle. Every week, staff had to manually parse years of historical transaction data exported from the legacy ERP (Tally Prime).

This manual approach was rapidly breaking down:

- System Limitations: Excel frequently crashed when attempting to handle the 3-year historical dataset, which weighed in at nearly 1.8 GB of raw XML data.

- Data Corruption: The raw XML exports were notorious for missing tags, malformed nodes, and inconsistent character encodings (often a mix of UTF-8 and UTF-16) which broke standard import scripts.

- Human Error & Bias: Lacking a scientific forecasting method, order quantities were largely based on “gut feeling” by branch managers. This inevitably led to massive overstocking of slow-moving items (tying up vital capital) and fatal stockouts of high-velocity “A-Class” items.

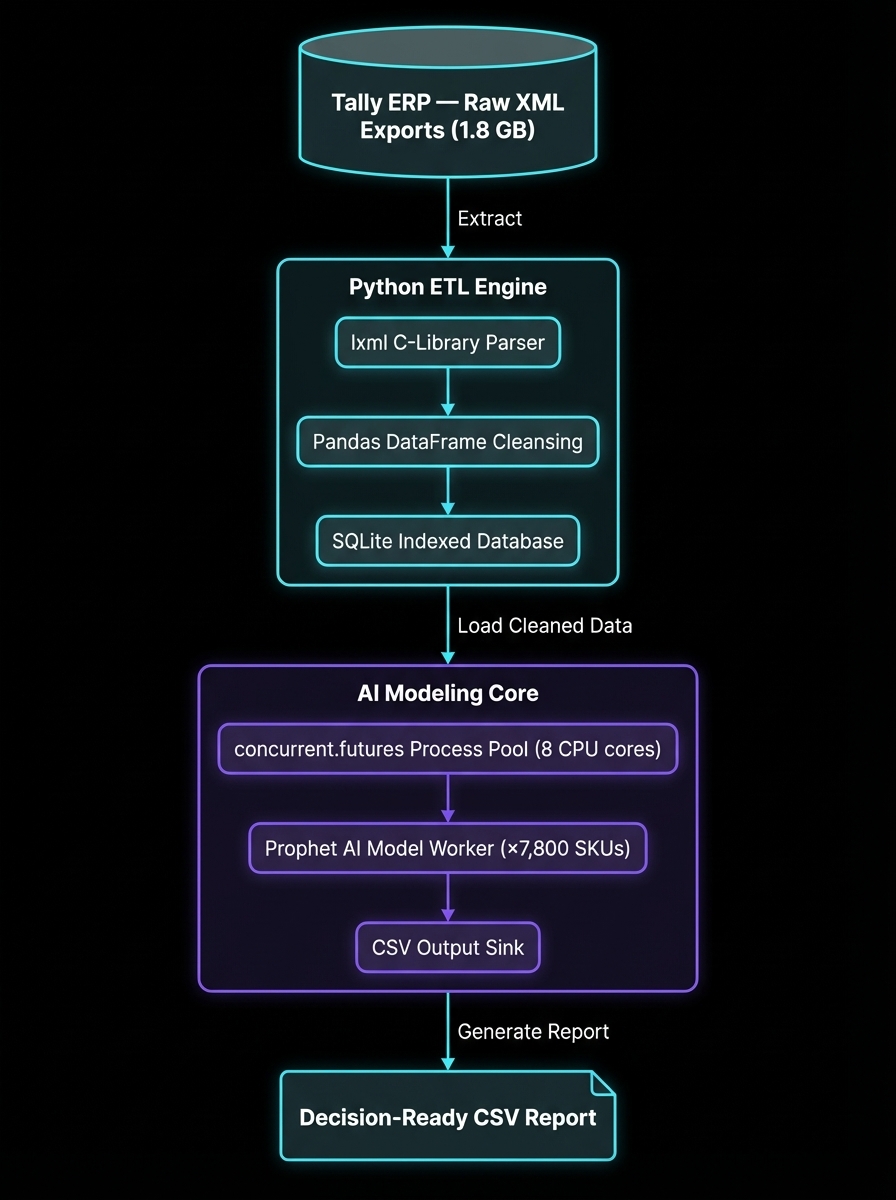

2. System Architecture: The Automated Pipeline

I architected a custom, headless Python application designed to handle the full data lifecycle autonomously: Ingest -> Sanitize -> Model -> Forecast -> Report.

Architecture flow: Heavy XML Export → lxml parsing → Pandas dataframe sanitization → SQLite indexed storage → Multiprocessed Prophet AI modeling → Final Actionable CSV.

Key Technical Subsystems

- High-Speed ETL Engine: Utilized the

lxmlC-library wrapper for hyper-fast algorithmic parsing of massive XMLs. Implemented custom exception handling to gracefully bypass corrupted XML nodes without halting the entire multi-gigabyte ingestion process. - Prophet AI Forecasting: Employs Facebook’s (Meta) Prophet library to generate independent, highly accurate time-series models for over 7,500 individual items. Crucially, the model is configured to explicitly account for weekly seasonality and dramatic demand spikes caused by specific UAE and Oman national holidays (e.g., Eid surges).

- Dynamic ABC Classification: Automatically segments inventory (e.g., “A - Ultra-Fast Moving”, “B - Steady”, “C - Dead Stock”) based on configurable velocity thresholds stored in an external

settings.pyfile. This allows management to tweak business logic without touching complex application code.

3. The Interface: Generated Output

While inherently a backend tool, the output is highly visible and impactful.

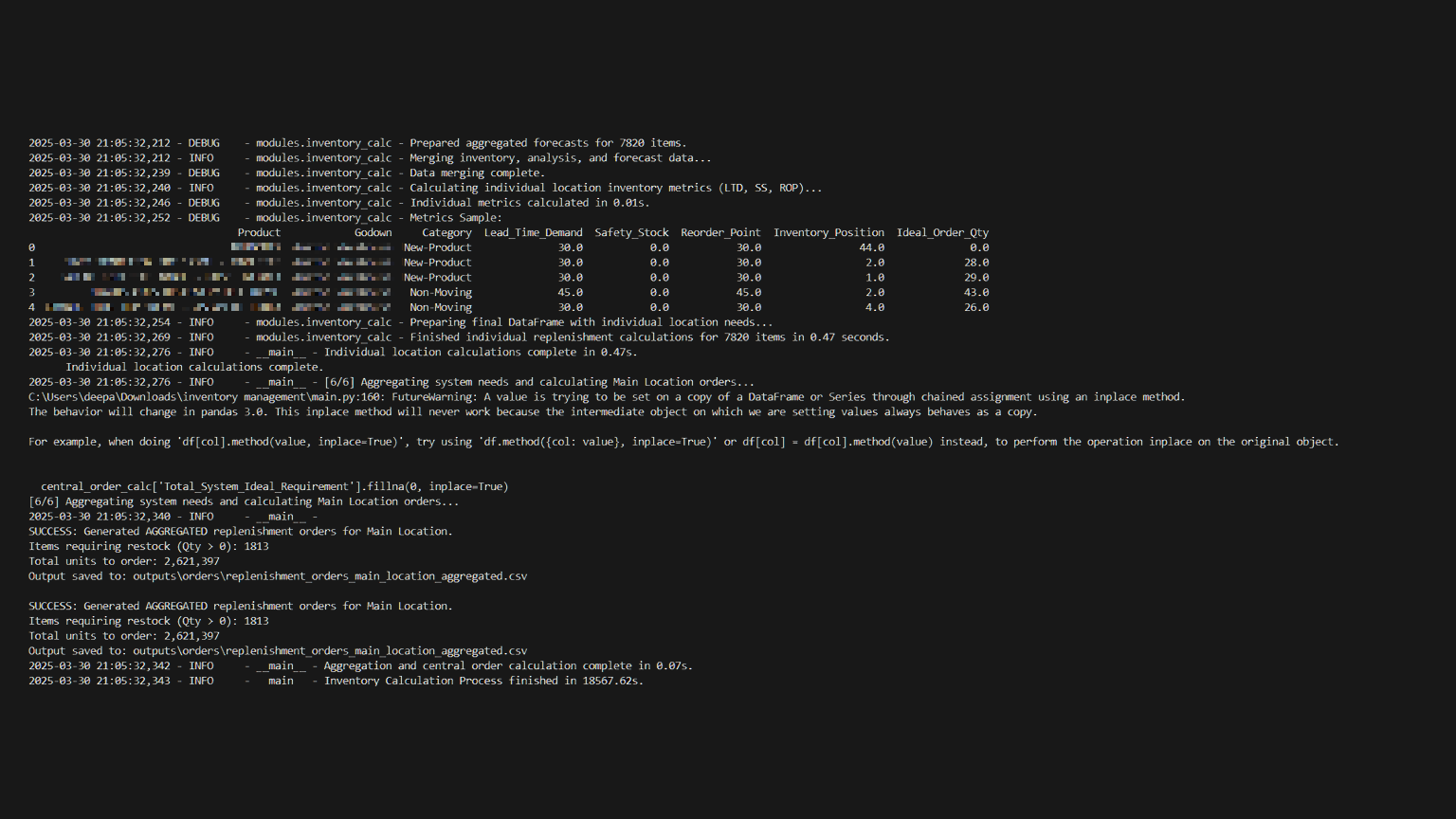

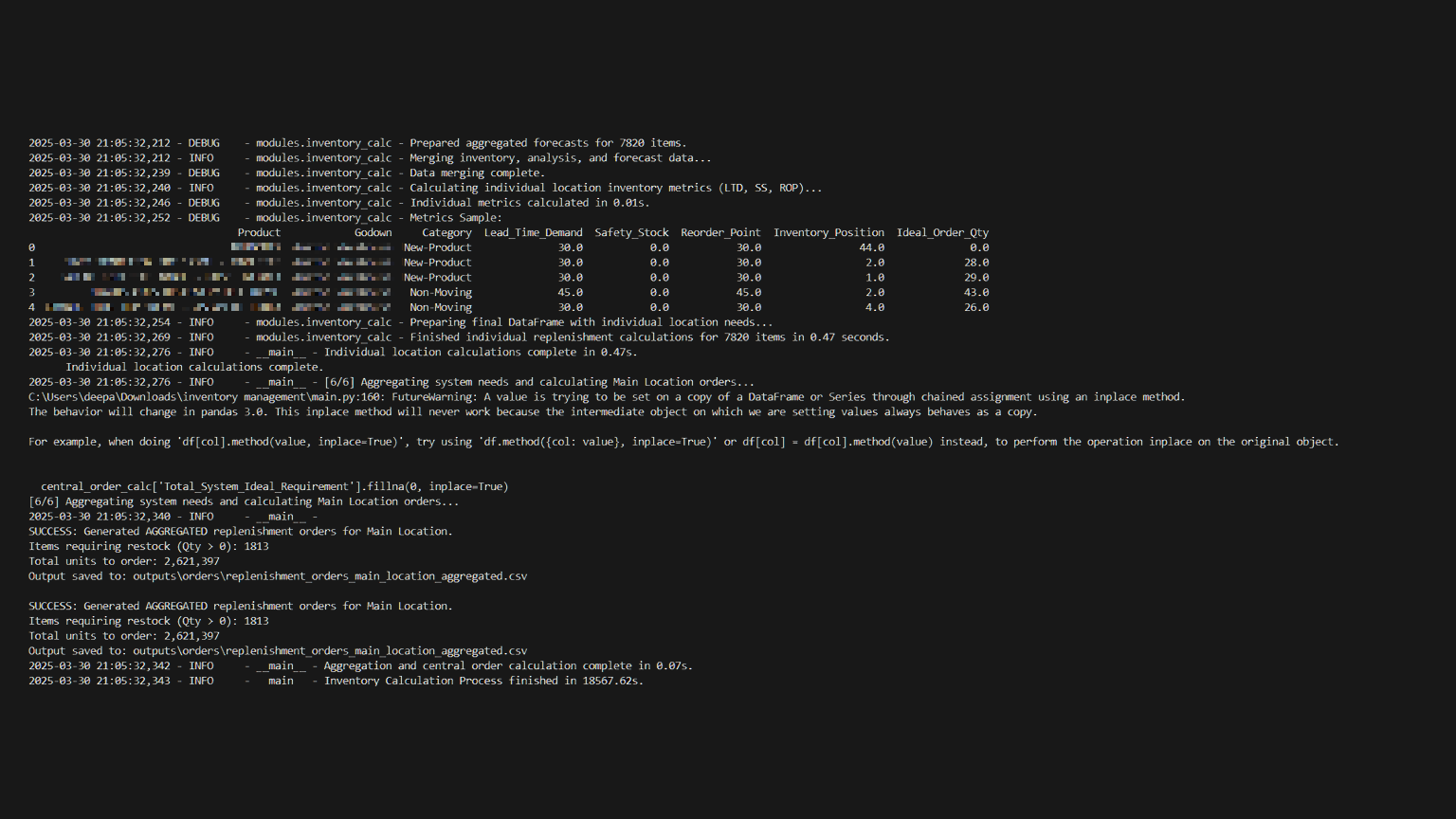

The Python console output displaying the multi-threaded processing initialization and progress tracking.

The Python console output displaying the multi-threaded processing initialization and progress tracking.

The system condenses millions of rows of history into a single, decision-ready CSV report. Here is a structural representation of the generated business logic:

SKU_Code,Product_Name,Category,Current_Stock,Safety_Stock,Forecast_Next_30d,Recommended_Reorder_Qty

"104882","L'Oreal Hyaluron Serum 50ml","Fast-Moving",120,50,450.5,381

"99231","Nivea Soft Cream 300ml","Steady",85,20,60.2,0

"44391","Discontinued Brand X Toner","Dead-Stock",200,0,0,04. Engineering Challenges & Solutions

Challenge 1: The “Malformed XML Nightmare”

Tally ERP generates legacy XML files (often 500MB+ per file) riddled with inconsistent tags, unescaped characters, and unpredictable UTF-16 encoding errors that caused standard Python decoders to throw continuous UnicodeDecodeErrors.

The Engineering Fix:

I bypassed standard standard library tools and wrote a hardened, custom parser using lxml.etree.iterparse(). This allowed the script to stream the file piece-by-piece from disk rather than loading 1.8GB into RAM simultaneously (which previously caused MemoryError crashes on standard office PCs). I combined this with a generic text pre-processor script that mapped and forcefully stripped invalid Unicode characters before passing the stream to the XML parser.

Challenge 2: Severe Performance Bottlenecks

Generating individual forecasting models is computationally expensive. Running the Prophet algorithms sequentially in a standard for loop across 7,800 items on a standard desktop CPU was projected to take over 18 hours.

The Engineering Fix: I dramatically refactored the application architecture to utilize Multiprocessing Parallelism.

- I implemented Python’s

concurrent.futures.ProcessPoolExecutor. - Instead of processing one item at a time, the system divides the 7,800 SKUs into batches and spawns multiple sub-processes mapped directly to the number of physical CPU cores available on the host machine.

- Result: By achieving near 100% CPU utilization across 8 cores simultaneously, the total processing runtime collapsed from ~18 hours to a highly manageable ~4.5 hours, allowing the reports to be generated easily overnight.

5. Final Outcome & Business Value

This software project fundamentally transformed the company’s supply chain operations, replacing “gut feeling” with relentless, data-driven optimization policy.

- Extreme Efficiency: Completely automated the grueling weekend work of manual data cleaning and consolidation, saving dozens of human-hours per month.

- Capital Optimization: By accurately identifying and forecasting items, purchasing budgets were dynamically re-allocated. Capital previously trapped in “Dead Stock” was diverted automatically to fund “Fast-Moving” essentials, boosting overall revenue.

- Scalability Proven: The robust

lxmlandSQLitefoundation proved inherently capable of absorbing compounding years of historical gigabyte-scale data without succumbing to the memory crashes that plagued the legacy Excel workflow.